Software teams rarely slow down because they lack talent. More often, work gets stuck between steps. Tickets sit in queues. Pull requests wait on the same senior engineers. Documentation falls behind the product and stays there until it becomes a problem. Over time, that friction starts to shape delivery more than the code itself.

That helps explain why AI has become a real focus in engineering. GitHub reported that, in a controlled experiment, developers using GitHub Copilot completed a coding task 55% faster than those without it. It is a strong statistic, but speed is only part of what teams are looking at.

For engineering leaders, the question is not whether AI can help. It is where it actually improves the workflow, where it creates extra risk, and where human judgment still needs to stay firmly in place.

This article looks at how AI workflow automation fits into developer tasks, where teams are seeing real operational value, and where a careful approach still makes more sense than rushing ahead.

TL;DR: AI development workflow automation

- AI is useful in engineering work, but mostly for repetitive tasks.

- It helps most with code drafts, test cases, CI/CD checks, documentation, and post-release issue spotting.

- AI developer workflows still need experienced people behind them. AI can speed up the process, but it cannot determine whether the result is actually correct.

- The weaknesses are well-known: risky code, superficial patches, unclear context, and developers who place too much trust in the result.

- A good AI dev workflow keeps review, ownership, and architecture decisions with the team.

- AI dev workflow automation works best when it removes small blockers instead of pretending to run the whole development process.

What is AI workflow automation?

AI workflow automation in software development means using machine learning models, LLMs, and smart agents to take over repeatable tasks or speed up work that used to depend on manual effort.

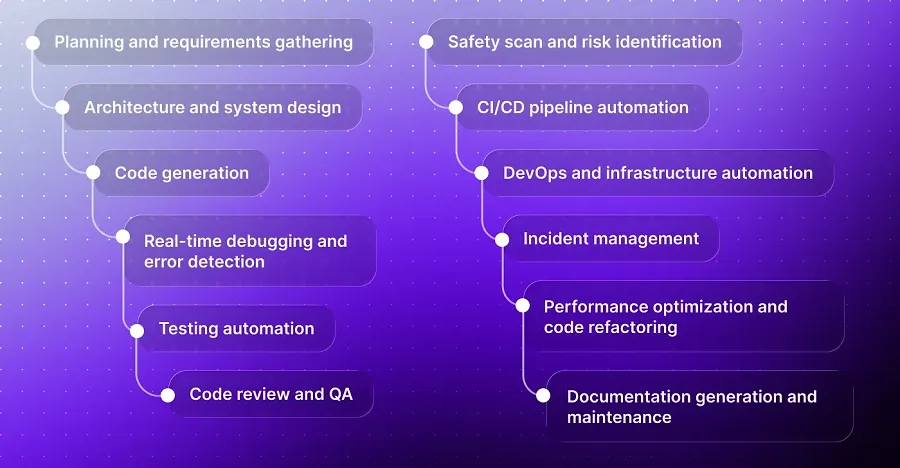

It can help with many parts of the development process, such as:

- turning a product brief into user stories

- creating unit tests

- checking pull requests for security risks

- flagging deployments that may cause issues

The spectrum of AI-driven workflows is wide. At one end sit AI-assisted IDE suggestions that a developer accepts or rejects. At the other end are fully autonomous agents, like Cognition's Devin, that can plan, code, test, and debug multi-step tasks with minimal human input. Most teams today operate somewhere in the middle, where the interesting trade-offs lie.

Benefits of AI-driven dev workflows

The strongest value from AI integrated into developer workflows does not appear across every team in the same way. It usually shows up where developers lose time on repeatable work, review gaps, or slow handoffs between tools.

Faster work on routine dev tasks

AI can create the first draft of code, tests, user stories, and technical docs. Developers still review the result, but they no longer start from zero each time. This is one reason AI developer workflows often bring the clearest gains in code-heavy teams.

More stable checks across large codebases

Manual review depends on time, focus, and context. AI-based checks can run the same way across every pull request, build, and release. This helps teams spot weak code, missed rules, and security risks before they move further down the pipeline.

Earlier bug and risk detection

A bug costs less to fix before release than after users find it. AI can review code changes, test results, logs, and deployment history to warn teams about risky releases. This is where AI development workflow automation can support QA, DevOps, and product teams simultaneously.

Faster knowledge transfer

New developers often lose days inside legacy code, missing docs, and unclear product logic. AI can explain code, summarize modules, and prepare draft docs for review. It does not replace senior support, but it gives junior developers a clearer first step.

Better use of senior developer time

Senior developers should not spend most of their day on repeatable review tasks, small fixes, or status updates. A strong AI dev workflow gives them more time for architecture, product logic, edge cases, and decisions that need experience. For teams with complex products, custom AI development can turn this into a practical system built around their real processes.

One point matters here: AI works best on top of clear requirements, clean architecture, and good test coverage. If the process is messy, AI will not fix it. It may only help the team move faster in the wrong direction.

AI use cases at every stage of the developer workflow

AI can support much more than code completion. Across the developer workflow, it can help:

- clarify requirements

- plan architecture

- write tests

- review pull requests

- detect security risks

- keep documentation closer to the actual codebase

The real value comes from using it where it removes repetitive work and gives engineers a better starting point, while keeping human review in place for decisions that affect quality, security, and system design.

Planning and requirements gathering

At the planning stage, AI analyzes user feedback, product briefs, and ticket backlogs. It identifies trends, highlights conflicting requirements, and drafts basic user stories. Linear and Jira have both rolled out AI assistants that suggest issue descriptions, estimate ticket complexity, and flag duplicates across the backlog.

The practical value: surfacing ambiguity early. When a feature brief is unclear, an AI-driven prompt can identify the gap before it turns into three weeks of rework.
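
Duplicate flagging is the easiest of these ideas to illustrate. The sketch below is not how Linear or Jira implement it. It is a minimal stand-in that compares ticket titles by word overlap (Jaccard similarity). The ticket IDs, titles, and the 0.6 threshold are all hypothetical.

```python
import re


def tokens(title: str) -> set[str]:
    """Lowercase a ticket title and split it into a set of words."""
    return set(re.findall(r"[a-z0-9]+", title.lower()))


def jaccard(a: set[str], b: set[str]) -> float:
    """Share of words two titles have in common (0.0 to 1.0)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def find_possible_duplicates(tickets: dict[str, str], threshold: float = 0.6):
    """Return (id, id, score) for ticket pairs whose titles overlap heavily."""
    ids = sorted(tickets)
    pairs = []
    for i, first in enumerate(ids):
        for second in ids[i + 1:]:
            score = jaccard(tokens(tickets[first]), tokens(tickets[second]))
            if score >= threshold:
                pairs.append((first, second, round(score, 2)))
    return pairs


# Hypothetical backlog used only for illustration.
backlog = {
    "T-1": "Checkout button does not respond on mobile",
    "T-2": "Checkout button does not respond on mobile Safari",
    "T-3": "Add dark mode to settings page",
}
print(find_possible_duplicates(backlog))
```

Here T-1 and T-2 are flagged as a likely duplicate pair, while T-3 is left alone. A production assistant would likely use semantic embeddings rather than raw word overlap, but the review step stays the same: a person confirms the match before tickets are merged.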

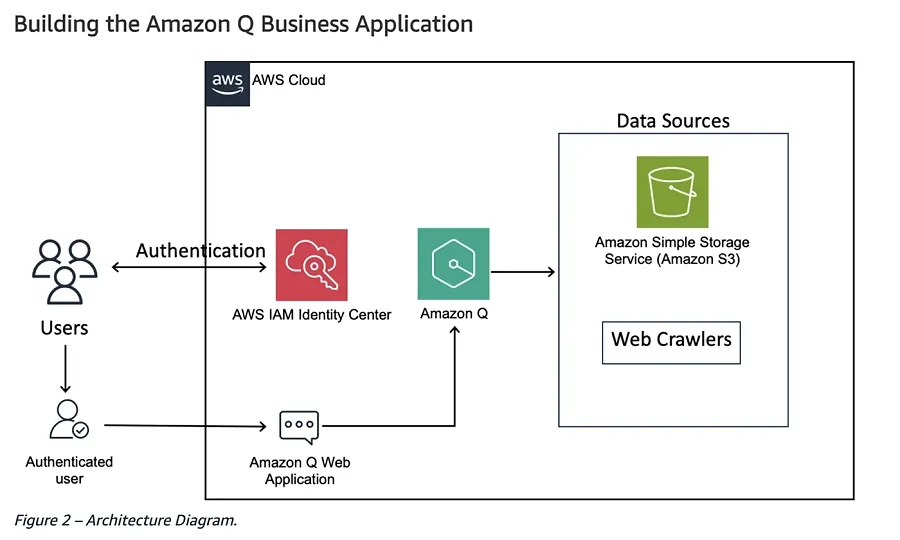

Architecture and system design

AI can generate architectural diagrams, suggest design patterns, and flag scalability concerns based on projected load. Amazon Q Developer, the AI assistant embedded in AWS tooling, can propose service-level architectures for AWS-native applications from plain-language descriptions.

This doesn't replace a senior architect. It accelerates the early scaffolding phase and gives less-experienced engineers a more structured starting point for review.

Code generation

This is where AI developer workflow adoption is highest. GitHub Copilot, Cursor, and Tabnine are among the most common tools teams use for AI-assisted coding. They read the context around the work: open files, past changes, and code comments. Then they suggest anything from small code completions to full functions or changes across several files.

Real-time debugging and error detection

AI-powered debugging features in JetBrains AI Assistant and Cursor can trace the root cause of an error across call stacks and suggest fixes inline. Platforms like Rookout apply AI to surface live debugging data without requiring code changes or redeployment.

The leverage is most visible in distributed systems, where tracing an error across microservices manually can absorb hours. AI tools can correlate logs, spans, and exceptions across services in seconds.
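
The correlation step itself is simple to picture. As a rough sketch, assuming every service writes a shared `trace_id` into its logs (the field names and sample records here are hypothetical), grouping by that ID and sorting by time already points to where a failure started:

```python
from collections import defaultdict


def group_by_trace(records):
    """Group log records by trace ID and sort each group by timestamp."""
    traces = defaultdict(list)
    for record in records:
        traces[record["trace_id"]].append(record)
    return {tid: sorted(items, key=lambda r: r["ts"]) for tid, items in traces.items()}


def first_error(trace):
    """Return the earliest error-level record in a trace, or None."""
    return next((r for r in trace if r["level"] == "error"), None)


# Hypothetical log records from three services.
logs = [
    {"trace_id": "t1", "service": "checkout", "ts": 3, "level": "error", "msg": "payment failed"},
    {"trace_id": "t1", "service": "payments", "ts": 2, "level": "error", "msg": "card API timeout"},
    {"trace_id": "t1", "service": "gateway", "ts": 1, "level": "info", "msg": "POST /orders"},
    {"trace_id": "t2", "service": "gateway", "ts": 4, "level": "info", "msg": "GET /health"},
]

traces = group_by_trace(logs)
origin = first_error(traces["t1"])
print(origin["service"], origin["msg"])
```

In this example the checkout error is a symptom, and the earlier timeout in the payments service is the likelier origin. AI tooling adds value on top of this by ranking candidates and summarizing them, not by replacing the underlying trace data.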

Testing automation

Writing tests is one of the tasks developers most reliably skip under deadline pressure, and one of the most expensive to skip. Qodo (formerly CodiumAI) generates meaningful unit and integration tests by analyzing code behavior, not just structure. Diffblue Cover does the same for Java codebases, producing full JUnit test suites automatically.

For example, Deutsche Bank's engineering team used Diffblue Cover to generate tests for more than 700K lines of legacy Java code, a project that would otherwise have taken years by hand.

Code review and QA

AI-powered workflow automation in code review goes well past linting. Tools like CodeRabbit and SonarQube's AI features analyze pull requests for logic errors, style violations, performance anti-patterns, and security issues. Then they write review comments in plain language.

The efficiency gain is genuine, but so is the risk. AI reviewers occasionally flag false positives with high confidence. The most effective setups treat AI review as a first pass, not a replacement for human judgment on architectural decisions.

Security scanning and risk identification

Security is one area where AI already feels useful in everyday development work. Snyk, for example, checks code, containers, infrastructure-as-code, and open-source packages while developers build. When it finds a problem, it does more than flag it: it gives the team a practical path to fix it.

GitHub Advanced Security, or GHAS, works closer to the repository itself. It helps teams spot exposed secrets, review dependencies, and catch risks before they move further into the development cycle.

CI/CD pipeline automation

GitLab Duo and AI-extended GitHub Actions can auto-generate pipeline configurations, identify flaky tests, and predict which changes are likely to cause build failures based on historical patterns. Harness AI goes further, using ML to continuously optimize pipeline performance and flag anomalous build durations.

For teams that manage dozens of microservices, intelligent CI/CD makes deployments easier to follow and control.
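
Flaky-test detection does not have to start with a model. One common heuristic, used here as an assumption rather than a description of how GitLab Duo or GitHub Actions work, is that a test is suspect when it both passes and fails on the same commit. The run records below are hypothetical.

```python
from collections import defaultdict


def find_flaky_tests(runs):
    """Return test names that both passed and failed on the same commit."""
    outcomes = defaultdict(set)
    for run in runs:
        outcomes[(run["test"], run["commit"])].add(run["passed"])
    return sorted({test for (test, _), seen in outcomes.items() if seen == {True, False}})


# Hypothetical CI history: same commit, repeated runs.
history = [
    {"test": "test_login", "commit": "abc123", "passed": True},
    {"test": "test_login", "commit": "abc123", "passed": False},
    {"test": "test_cart", "commit": "abc123", "passed": False},
    {"test": "test_cart", "commit": "abc123", "passed": False},
    {"test": "test_search", "commit": "abc123", "passed": True},
]
print(find_flaky_tests(history))
```

Only `test_login` is reported. `test_cart` fails consistently, which makes it a real failure rather than a flaky one, and that distinction is what keeps the signal useful.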

DevOps and infrastructure automation

HashiCorp has started adding AI capabilities to Terraform, giving teams help with writing, explaining, and fixing infrastructure-as-code. Pulumi AI takes a different route. Developers describe what they need in plain language, and it creates full infrastructure stacks in TypeScript, Python, or Go.

One risk needs to be stated plainly: infrastructure mistakes rarely fail gently. An AI-generated Terraform plan that accidentally deletes a production database is not the kind of problem anyone wants to face at 3 AM. Human review of AI-generated infrastructure code remains essential, no matter how confident the model sounds.
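
One way to back that review with a guardrail is a small check on the plan before anyone approves it. The sketch below assumes the plan has been exported with `terraform show -json`, whose output lists each resource under `resource_changes` with a `change.actions` array. The set of protected resource types and the sample plan are assumptions for illustration.

```python
# Assumption: which resource types count as "protected" is a team decision.
PROTECTED_TYPES = {"aws_db_instance", "aws_s3_bucket"}


def destructive_changes(plan: dict) -> list[str]:
    """List addresses of protected resources that the plan would delete.

    A replacement shows up as both "delete" and "create", so it is flagged too.
    """
    flagged = []
    for change in plan.get("resource_changes", []):
        actions = change.get("change", {}).get("actions", [])
        if "delete" in actions and change.get("type") in PROTECTED_TYPES:
            flagged.append(change["address"])
    return flagged


# Trimmed, hypothetical plan in the shape produced by `terraform show -json`.
sample_plan = {
    "resource_changes": [
        {"address": "aws_db_instance.orders", "type": "aws_db_instance",
         "change": {"actions": ["delete", "create"]}},
        {"address": "aws_instance.web", "type": "aws_instance",
         "change": {"actions": ["delete"]}},
        {"address": "aws_s3_bucket.assets", "type": "aws_s3_bucket",
         "change": {"actions": ["update"]}},
    ]
}

flagged = destructive_changes(sample_plan)
if flagged:
    print("Needs explicit human approval:", flagged)
```

A check like this does not replace the reviewer. It makes sure the riskiest lines of an AI-generated plan are the ones a person reads first.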

Incident management

PagerDuty AIOps and Datadog’s Watchdog use machine learning to connect related alerts, reduce noise, and point teams toward likely root causes during outages. Instead of making engineers work through hundreds of alerts manually, AI helps narrow the issue to the most likely source and suggests the next runbook steps.
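
To make "connecting related alerts" concrete, here is a deliberately simple sketch. It is not how PagerDuty or Datadog work internally. It only groups alerts from the same service that fire within a short time window, and the five-minute window and alert fields are assumptions.

```python
def group_alerts(alerts, window_seconds=300):
    """Group alerts from the same service that fire close together in time."""
    groups = []
    open_group = {}  # service name -> the group currently collecting its alerts
    for alert in sorted(alerts, key=lambda a: a["ts"]):
        group = open_group.get(alert["service"])
        if group and alert["ts"] - group[-1]["ts"] <= window_seconds:
            group.append(alert)
        else:
            group = [alert]
            groups.append(group)
            open_group[alert["service"]] = group
    return groups


# Hypothetical alerts; "ts" is seconds since the start of the incident.
alerts = [
    {"service": "db", "ts": 0, "msg": "high latency"},
    {"service": "api", "ts": 30, "msg": "5xx rate up"},
    {"service": "db", "ts": 60, "msg": "connection pool exhausted"},
    {"service": "db", "ts": 120, "msg": "replica lag"},
    {"service": "db", "ts": 1000, "msg": "disk usage warning"},
]

for group in group_alerts(alerts):
    print(group[0]["service"], [a["msg"] for a in group])
```

Five alerts collapse into three groups, and the on-call engineer starts with the cluster of database alerts instead of a flat list. ML-based tools go further by linking groups across services, but the goal is the same: fewer, more meaningful pages.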

Performance optimization and code refactoring

Amazon CodeGuru Profiler finds performance bottlenecks in live applications and recommends code-level fixes. Sourcegraph Cody can refactor entire modules from natural language instructions, applying consistent changes across a codebase that would take weeks to update manually.

Documentation generation and maintenance

Documentation is the perennial casualty of sprint pressure. Mintlify and Swimm generate and maintain documentation by reading actual code. When the code changes, the docs can update automatically. GitHub's Copilot for Docs lets teams query their documentation conversationally.

The quality of AI-generated docs depends directly on code quality. Poorly named functions and missing inline comments give the model little signal to work with.

Common challenges of AI-driven workflows

When companies introduce AI across development workflows, the same problems tend to show up early. It's worth knowing them before choosing tools or rolling them out across the team:

- Code quality drift. AI-generated code often appears syntactically correct but is structurally weak. Without robust review and explicit quality guidelines, technical debt builds more quickly than it does with human-written code.

- Expanded security surface. Adding AI to a modern development workflow also adds risk. These tools often sit close to source code, repositories, and sometimes even production environments. That means the review process cannot stop at the code they generate. The tools themselves need the same level of security scrutiny as any other system with sensitive access.

- Over-reliance and skill loss. AI tools can make developers too dependent on shortcuts. If a junior engineer lets the tool write the test, explain the bug, or suggest the fix, they may get the answer without learning how to reach it. Over time, that creates a real problem. Reading code, checking assumptions, debugging, and making technical decisions are skills that need regular practice.

- Cost and licensing complexity. Enterprise AI tooling is not cheap. GitHub Copilot Enterprise, Snyk Business, and Datadog's AI features each carry meaningful per-seat costs. ROI calculations should account for the full stack of tools, not individual tools in isolation.

- Integration overhead. Most AI tools promise quick setup. In real projects, these tools rarely plug in as easily as they look in a demo. Teams still have to connect them to CI/CD pipelines, IAM, access rules, and compliance processes. That work takes engineering time, and many companies don’t budget for it properly at the start.

DigitalSuits' insights about the workflow of the modern developer

At DigitalSuits, AI development and generative AI integration are already part of the workflow of the modern developer, but not as a replacement for engineering. The value is practical: AI helps with estimates, keeps project standards close to the code, reduces manual handoffs, and gives teams a stronger first draft to review.

Internal estimation bot for faster project assessment

One example is DigitalSuits' internal Estimation Bot, a CLI tool that helps assess web project development time from different types of input:

- a Figma layout

- an existing website URL

- a written project brief

Based on that input, the bot creates a ready Excel estimate in DigitalSuits' internal format. The table is broken down by pages, sections, and expected development time, so the manager receives a structured starting point instead of a rough manual guess.

The bot also analyzes the source material before it creates an estimate. It can:

- parse websites through sitemap and navigation

- identify Shopify sections

- pull pages and components from Figma through the REST API

- send each section to Claude for complexity assessment and time estimation

The key detail is that the estimate stays editable. Team coefficients for QA, project management, code review, and risk buffers are shown as Excel formulas, not fixed, hidden numbers. A manager can adjust any multiplier, and the full estimate recalculates automatically.
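
The recalculation idea can be sketched in a few lines. This is not the Estimation Bot's actual formula. It assumes each coefficient is applied as a share of base development hours, and the section names, hours, and coefficient values are hypothetical.

```python
def total_estimate(section_hours, coefficients):
    """Apply each team coefficient as a share of base development hours."""
    base = sum(section_hours.values())
    overhead = {name: base * share for name, share in coefficients.items()}
    return {"base": base, "overhead": overhead, "total": base + sum(overhead.values())}


# Hypothetical per-section development hours.
sections = {"hero": 4, "product grid": 10, "footer": 2}

# Hypothetical multipliers a manager could adjust.
coefficients = {
    "qa": 0.25,
    "project_management": 0.125,
    "code_review": 0.125,
    "risk_buffer": 0.25,
}

estimate = total_estimate(sections, coefficients)
print(estimate["total"])  # 28.0

# Raising one multiplier recalculates the whole estimate.
adjusted = total_estimate(sections, {**coefficients, "qa": 0.5})
print(adjusted["total"])  # 32.0
```

Keeping the multipliers as visible inputs, rather than baking them into the AI's answer, is what lets a manager challenge one assumption without rerunning the whole assessment.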

That is where AI-powered workflow automation works best: AI prepares the base, while the final decision stays with the person responsible for the project.

The Estimation Bot is also connected to RingCentral. This means managers and non-technical team members can request an estimate directly from chat, without opening the terminal. The stack behind the tool includes Node.js, TypeScript, Anthropic API, ExcelJS, and Figma REST API.

Cursor and Claude Code setup for Shopify development

Another part of DigitalSuits' internal setup is the Cursor and Claude Code configuration for Shopify web development. It includes rules, slash commands, skills, and specialized agents that help standardize daily work across the team.

Rules work as context for the AI assistant. Some are always active, such as:

- git workflow

- code style

- task management

- team development standards

Others load depending on the file a developer opens. For example, when a Liquid section file is open, Cursor receives context about BEM, Online Store 2.0, and Shopify schema structure.

When an app file is open, the context switches to Remix and Polaris. As a result, AI writes code closer to the team’s standards from the start.
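
The selection logic behind file-scoped rules can be pictured as simple pattern matching. The sketch below is not Cursor's configuration format. It only illustrates the idea, and the rule labels and glob patterns are hypothetical.

```python
from fnmatch import fnmatch

# Rules that are always active, named after the list above.
ALWAYS_ON = ["git-workflow", "code-style", "task-management", "team-standards"]

# Hypothetical glob patterns; a real project would define its own.
CONDITIONAL_RULES = [
    ("sections/*.liquid", ["bem", "online-store-2.0", "shopify-schema"]),
    ("app/*", ["remix", "polaris"]),
]


def rules_for(path: str) -> list[str]:
    """Return the rule set that applies to the file a developer has open."""
    active = list(ALWAYS_ON)
    for pattern, rules in CONDITIONAL_RULES:
        if fnmatch(path, pattern):
            active.extend(rules)
    return active


print(rules_for("sections/hero.liquid"))
print(rules_for("app/routes/index.tsx"))
```

The Liquid file picks up the BEM and schema context, the app file picks up Remix and Polaris, and neither carries the other's rules. Smaller, more relevant context is usually easier for the assistant to follow.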

This is the practical side of AI dev workflow automation: the assistant does not just generate code; it works inside the team's real rules and project structure.

Slash commands that support the full task cycle

DigitalSuits also uses slash commands to support the full task cycle:

- /create-prompt turns a short task description in Ukrainian, Russian, or English into a detailed prompt

- /create-branch creates a branch based on task.md

- /create-pr runs Theme Check and prepares a pull request

- /qa-handoff creates a clear message for the tester

Each action still needs confirmation. This keeps the developer in control while removing repetitive steps from the process.

Task.md as the main source of project context

The task.md file works as the main source of task context. It stores the ClickUp task ID, Figma links, task description, and acceptance criteria. Branches, commits, pull requests, and QA messages all use the same file, helping keep the task tracker and Git aligned.
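
To show why a single source file keeps things aligned, here is a minimal sketch. The task.md layout, the task ID format, and the branch naming convention are all hypothetical, since the real file format is internal to the team.

```python
import re

# Hypothetical task.md content; the real layout is not documented here.
TASK_MD = """\
Task ID: CU-1234
Title: Add sticky header to product page
Figma: https://example.com/figma/placeholder
"""


def parse_task(text: str) -> dict[str, str]:
    """Read simple 'Key: value' lines into a dictionary."""
    fields = {}
    for line in text.splitlines():
        key, sep, value = line.partition(":")
        if sep:
            fields[key.strip().lower()] = value.strip()
    return fields


def branch_name(task: dict[str, str]) -> str:
    """Build a branch name from the task ID and a slug of the title."""
    slug = re.sub(r"[^a-z0-9]+", "-", task["title"].lower()).strip("-")
    return f"feature/{task['task id']}-{slug}"


def pr_title(task: dict[str, str]) -> str:
    """Build a pull request title that carries the same task ID."""
    return f"[{task['task id']}] {task['title']}"


task = parse_task(TASK_MD)
print(branch_name(task))
print(pr_title(task))
```

Because the branch and the pull request title are both derived from the same parsed fields, the task ID cannot drift between the tracker and Git.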

Specialized AI agents with clear access levels

DigitalSuits also uses eight specialized agents with clear roles and access levels. Some agents can work directly with the code, while others only review and report.

- The frontend developer, Shopify developer, and pixel-perfect agents have full code access.

- The QA engineer and section architect agents work in read-only mode.

- Validator agents can check the work, but they cannot change the code they review.

That separation is intentional. A tool that validates code should not also rewrite it.
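
The same separation can be expressed as a small permission check. This is an illustrative sketch, not the team's actual agent configuration. The agent identifiers are hypothetical labels for the roles named above, and it does not list all eight agents.

```python
from enum import Enum


class Access(Enum):
    READ_ONLY = "read-only"
    FULL = "full"


# Hypothetical identifiers for the roles described in the text.
AGENTS = {
    "frontend-developer": Access.FULL,
    "shopify-developer": Access.FULL,
    "pixel-perfect": Access.FULL,
    "qa-engineer": Access.READ_ONLY,
    "section-architect": Access.READ_ONLY,
    "validator": Access.READ_ONLY,
}


def can_write(agent: str) -> bool:
    """Unknown agents default to read-only, the safer choice."""
    return AGENTS.get(agent, Access.READ_ONLY) is Access.FULL


def apply_change(agent: str, path: str, files: dict[str, str], content: str) -> None:
    """Write a file only if the agent has full access."""
    if not can_write(agent):
        raise PermissionError(f"{agent} is read-only and cannot modify {path}")
    files[path] = content


files = {}
apply_change("shopify-developer", "sections/hero.liquid", files, "<div></div>")
print(sorted(files))
print(can_write("validator"))
```

Defaulting unknown agents to read-only means a newly added or misnamed agent cannot edit code by accident.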

Reusable skills for recurring development tasks

The team also uses documented skills for recurring development tasks, including:

- extracting data from Figma

- creating Shopify sections according to team standards

- setting up speed improvements

- checking accessibility against WCAG 2.1 AA

- testing responsive behavior across breakpoints

These are not one-time prompts. They are reusable workflows that help developers repeat the same process with more consistent results. They also make onboarding easier because new team members can follow the same patterns already used by experienced developers.

What this says about AI in developer workflows

The benefits of AI dev workflow automation are strongest when AI fits into the engineering process instead of sitting outside it.

For DigitalSuits, that means faster estimates, clearer task context, fewer manual handoffs, better standardization, and stronger support for Shopify development teams.

For companies that want to apply the same practical approach to their own products or internal tools, DigitalSuits offers AI development services focused on real business and engineering use cases.

The bottom line

AI development workflow automation has moved from experiment to standard practice. The productivity gains are real, but they're proportional to how thoughtfully the tools are chosen, governed, and reviewed.

The teams that get the best outcomes are generally not the ones with the most AI tools. They are the ones who have been deliberate about which stages of the modern developer workflow benefit most from automation, who maintains oversight of AI output, and what "good" actually looks like before any model enters the picture.

If you want to apply AI to your development process with the same level of control and practical value, contact us. DigitalSuits can help you define the right use cases, build custom tools, and integrate AI into your existing workflow.

Frequently asked questions

Can AI workflow automation work for small engineering teams?

Yes, and smaller teams sometimes benefit more. A two- or three-person team that applies AI to testing, code review, or documentation effectively multiplies its capacity without adding headcount. The main consideration is per-seat licensing cost relative to team size.

What should I automate first?

Begin by identifying the areas where your team wastes the most time or makes the most mistakes. Usually, that's either testing or code review. Automating the highest-friction point first gives the clearest signal on whether the investment is working.

Can AI tools introduce new security vulnerabilities?

Yes. AI-generated code has been shown to reproduce insecure patterns from training data. To reduce that risk, run a dedicated security scanner, such as Snyk or GitHub Advanced Security, on AI-generated code and keep human review in place.

How is AI-assisted development different from AI-automated development?

AI-assisted development means AI suggests or drafts output that a human reviews and approves. AI-automated development means AI executes tasks with minimal human involvement. Most teams operate in assisted mode today. Autonomous agents like Cognition's Devin represent the automated end of the spectrum, and they require more mature governance and oversight frameworks before they are production-ready.
